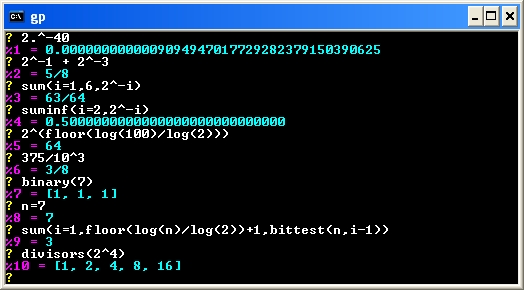

In my article “When Floats Don’t Behave Like Floats” I explained how calculations involving single-precision floating-point variables may be done, under the covers, in double or extended precision. This leads to anomalies in expected results, which I demonstrated with two C programs — compiled with Microsoft Visual C++ and run on a 32-bit Intel Core Duo processor.

In this article, I’ll do a similar analysis for double-precision floating-point variables, showing how similar anomalies arise when extended precision calculations are done. I modified my two example programs to use doubles instead of floats. Interestingly, the doubles version of program 2 does not exhibit the anomaly. I’ll explain.

.

.