An IEEE 754 binary floating-point number is a number that can be represented in normalized binary scientific notation. This is a number like 1.00000110001001001101111 × 2^-10, which has two parts: a significand, which contains the significant digits of the number, and a power of two, which places the “floating” radix point. For this example, the power of two turns the shorthand 1.00000110001001001101111 × 2^-10 into this ‘longhand’ binary representation: 0.000000000100000110001001001101111.
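The shorthand-to-longhand step can be checked mechanically. The following is a minimal sketch, not a general converter: the helper name `expand` is hypothetical, and it assumes a normalized significand (a single leading 1 before the radix point) and a negative exponent, as in the example.

```python
from fractions import Fraction

def expand(significand: str, exponent: int):
    """Expand '1.bbb... x 2^exponent' (exponent < 0) into longhand binary.

    Returns the longhand digit string and the exact value as a Fraction.
    """
    whole, frac = significand.split(".")
    if whole != "1" or exponent >= 0:
        raise ValueError("sketch handles only normalized significands and negative exponents")
    digits = whole + frac
    # Exact value: the digits read as an integer, scaled back by the number of
    # fractional bits, then multiplied by the power of two.
    value = Fraction(int(digits, 2), 2 ** len(frac)) * Fraction(2) ** exponent
    # Moving the radix point left by -exponent places leaves (-exponent - 1)
    # zeros between the point and the leading 1.
    longhand = "0." + "0" * (-exponent - 1) + digits
    return longhand, value

longhand, value = expand("1.00000110001001001101111", -10)
print(longhand)      # the longhand binary string
print(float(value))  # the same number in decimal, close to 0.001
```

Using `Fraction` keeps the arithmetic exact, so the decimal value printed at the end is the true value of the binary number rather than an approximation of it.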

The significands of IEEE binary floating-point numbers have a limited number of bits, called the precision; single-precision has 24 bits, and double-precision has 53 bits. The range of power-of-two exponents is also limited: for normalized numbers, the exponents in single-precision range from -126 to 127, and the exponents in double-precision range from -1022 to 1023. (The example above is a single-precision number.)
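These limits can be observed from Python. The sketch below assumes a platform where Python's `float` is an IEEE 754 double (true of essentially all current systems); the helper `decode_single` is a hypothetical name, it ignores the sign bit, and it handles only normalized single-precision values.

```python
import struct
import sys

def decode_single(x: float):
    """Return (exponent, 24-bit significand string) of x rounded to single precision."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    biased = (bits >> 23) & 0xFF   # 8-bit biased exponent field
    fraction = bits & 0x7FFFFF     # 23 explicitly stored significand bits
    if biased == 0 or biased == 0xFF:
        raise ValueError("zero, subnormal, infinity, or NaN: not a normalized number")
    significand = (1 << 23) | fraction  # restore the implicit leading 1
    return biased - 127, format(significand, "024b")

# Double precision: sys.float_info follows the C convention of a significand
# in [0.5, 1), so its exponent limits are one higher than the ones quoted above.
print(sys.float_info.mant_dig)     # precision in bits
print(sys.float_info.min_exp - 1)  # smallest normal exponent
print(sys.float_info.max_exp - 1)  # largest exponent

print(decode_single(0.001))
```

Only 23 significand bits are stored in a single-precision number; the 24th is the implicit leading 1 of a normalized value, which is why the decoder adds it back.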

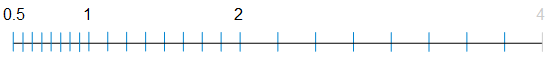

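Limited precision makes binary floating-point numbers discontinuous; there are gaps between them. Precision determines the number of gaps: with p bits of precision, there are 2^(p-1) gaps between any two consecutive powers of two. Precision and exponent together determine the size of the gaps: for numbers between 2^e and 2^(e+1), the gap is 2^(e-p+1). Gap size is therefore constant between a given pair of consecutive powers of two, but differs from one pair to the next, doubling each time the exponent increases by one.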
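The relationship between precision, exponent, and gap size can be sketched in a few lines. The function name `gap` is hypothetical, and the sketch assumes a positive, normalized input; subnormal numbers are out of scope here.

```python
import math

def gap(x: float, precision: int = 53) -> float:
    """Spacing of floating-point numbers in the power-of-two interval containing x."""
    if not (x > 0 and math.isfinite(x)):
        raise ValueError("sketch handles only positive finite values")
    # frexp returns a significand in [0.5, 1), so subtract 1 to get the
    # exponent of the normalized form 1.bbb... x 2^exponent.
    exponent = math.frexp(x)[1] - 1
    # The last of the `precision` significand bits has weight 2^(exponent - precision + 1).
    return math.ldexp(1.0, exponent - precision + 1)

print(gap(1.0))                     # gap for doubles in [1, 2)
print(gap(1.75) == gap(1.0))        # same interval, same gap
print(gap(2.0) / gap(1.0))          # next interval up: the gap doubles
print(1.0 / gap(1.0) == 2 ** 52)    # number of gaps in [1, 2) is 2^(p-1)
print(gap(0.001, precision=24))     # single-precision gap near the earlier example
```

For double precision, Python 3.9 and later expose the same quantity directly as `math.ulp(x)`; the hand-written version is shown only to make the dependence on precision and exponent explicit.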
