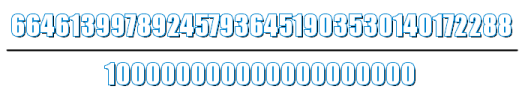

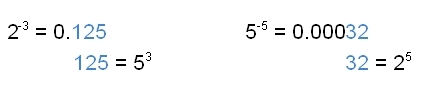

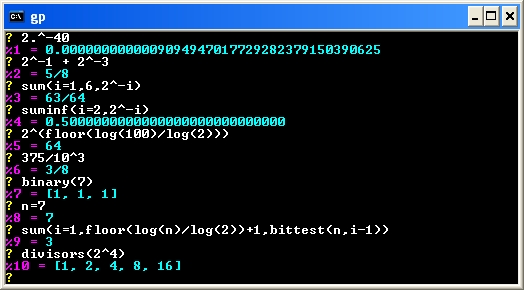

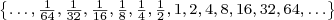

I’ve written about the formulas used to compute the number of decimal digits in a binary integer and the number of decimal digits in a binary fraction. In this article, I’ll use those formulas to determine the maximum number of digits required by the double-precision (double), single-precision (float), and quadruple-precision (quad) IEEE binary floating-point formats.

The maximum digit counts are useful if you want to print the full decimal value of a floating-point number (worst case format specifier and buffer size) or if you are writing or trying to understand a decimal to floating-point conversion routine (worst case number of input digits that must be converted).

Continue reading “Maximum Number of Decimal Digits In Binary Floating-Point Numbers”

.

.