In my previous exploration of double rounding errors in decimal to float conversions I showed two decimal numbers that experienced a double rounding error when converted to float (single-precision) through an intermediate double (double-precision). I generated the examples indirectly by setting bit combinations that forced the error, using their corresponding exact decimal representations. As a result, the decimal numbers were long (55 digits each). Mark Dickinson derived a much shorter 17 digit example, but I hadn’t contemplated how to generate even shorter numbers — or whether they existed at all — until Per Vognsen wrote me recently to ask.

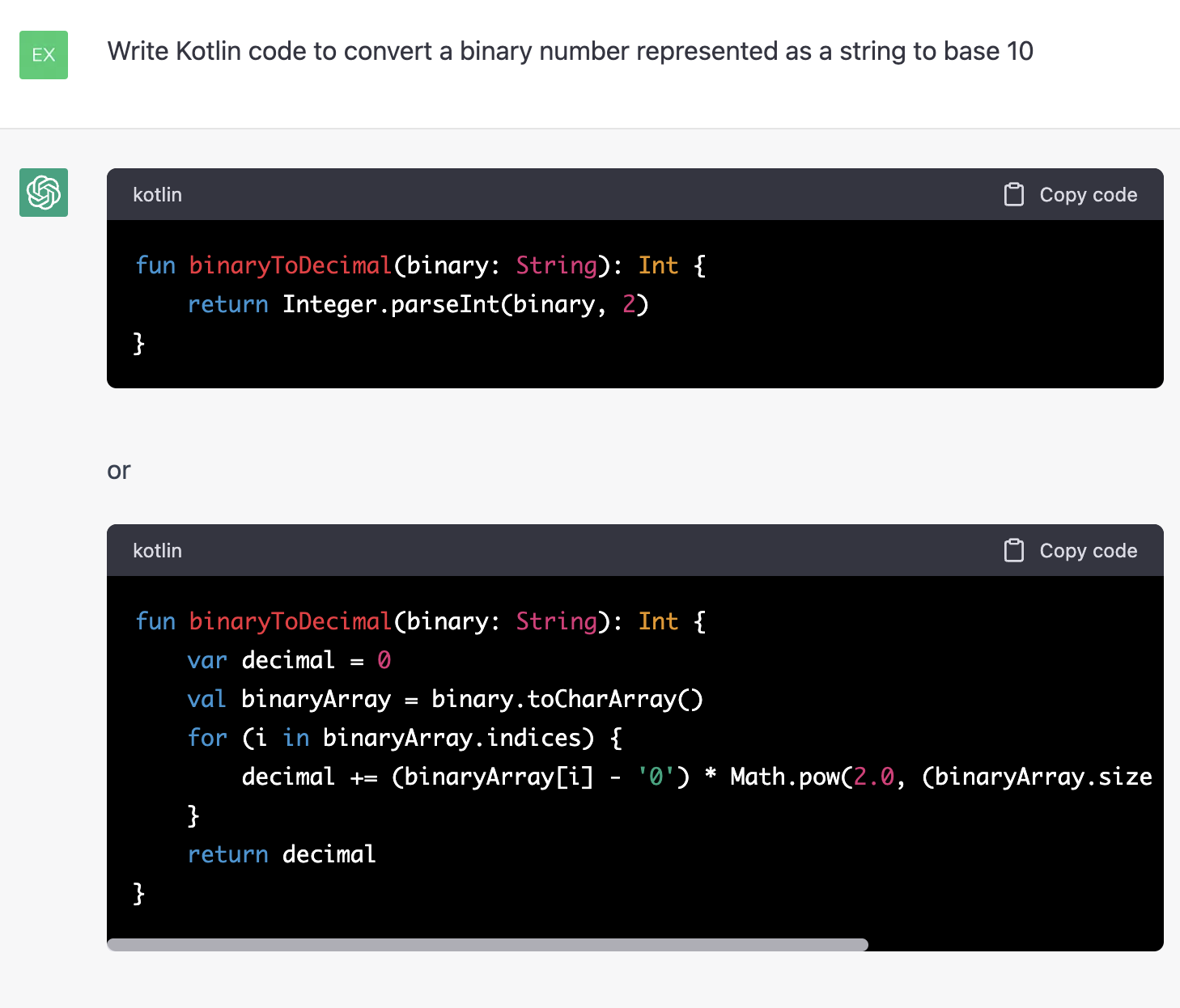

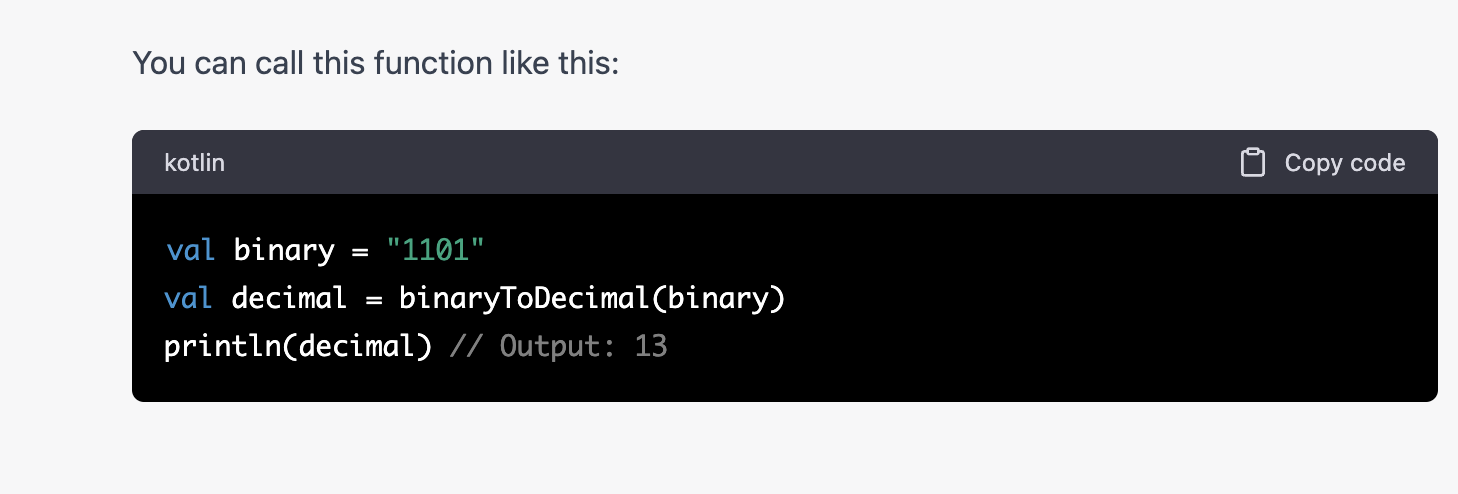

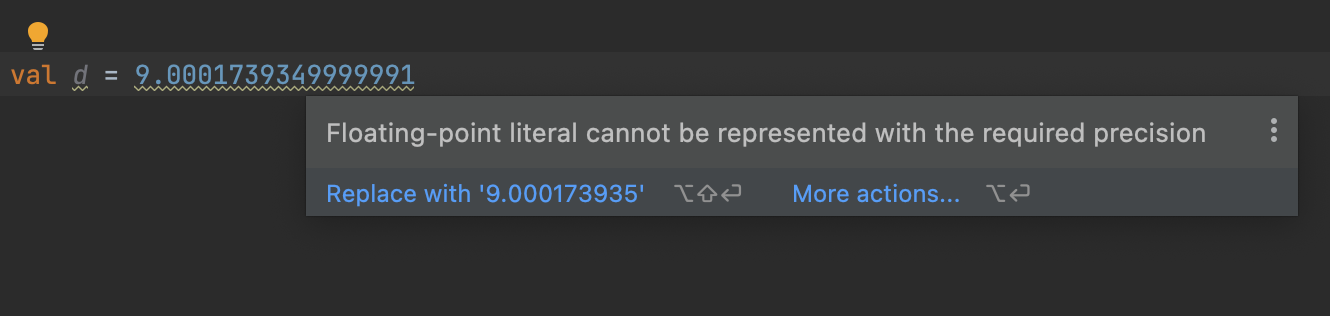

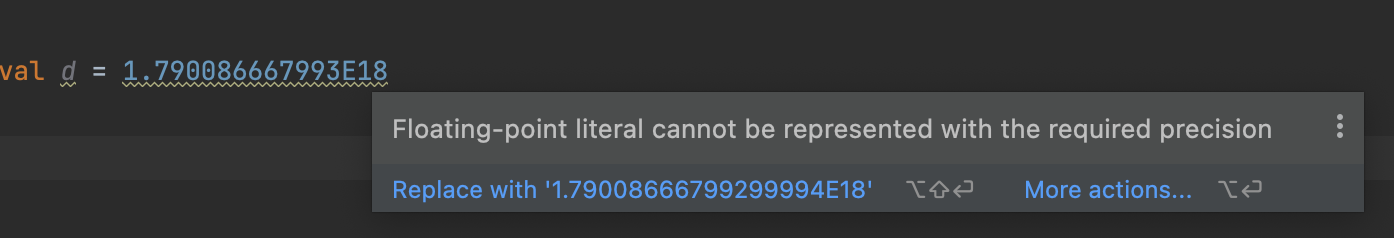

The easiest way for me to approach Per’s question was to search for examples, rather than try to find a way to construct them. As such, I wrote a simple Kotlin1 program to generate decimal strings and check them. I tested all float-range (including subnormal) decimal numbers of 9 digits or fewer, and tens of billions of random 10 to 17 digit float-range (normal only) numbers. I found example 7 to 17 digit numbers that, when converted to float through a double, suffer a double rounding error.

Continue reading “Double Rounding Errors in Decimal to Double to Float Conversions”