Pradeep Mutalik of The New York Times recently blogged about a puzzle that is an instance of the Josephus Problem. The problem, restated simply, is this: there are n people standing in a circle, of which you are one. Someone outside the circle goes around clockwise and repeatedly eliminates every other person in the circle, until one person — the winner — remains. Where should you stand so you become the winner?

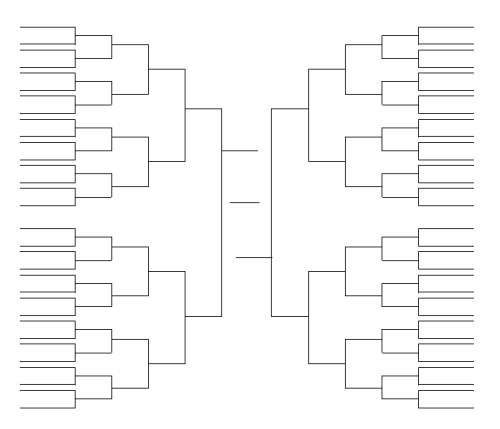

Here’s an example with 13 participants:

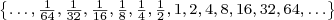
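For readers who want to check small cases by hand, the elimination process can be sketched in a few lines of Python. This is a minimal simulation under stated assumptions, not something taken from the original puzzle: positions are numbered 1 to n clockwise, counting starts at position 1, and the first person eliminated is the one at position 2. The function name `josephus_winner` is made up for illustration. If the elimination in your version of the puzzle starts at a different position, the result shifts accordingly.

```python
from collections import deque


def josephus_winner(n: int) -> int:
    """Simulate every-other-person elimination; return the winner's 1-based position.

    Assumption (not from the original puzzle): person 1 is skipped first,
    so person 2 is the first one eliminated.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    circle = deque(range(1, n + 1))
    while len(circle) > 1:
        circle.rotate(-1)  # skip one person: move them to the back of the queue
        circle.popleft()   # eliminate the person who is now at the front
    return circle[0]


print(josephus_winner(13))  # prints 11 under the assumptions above
```

A `deque` is used here because rotating and popping from the front are both cheap, which keeps the simulation linear in n.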

As Pradeep and his readers point out, there’s no need to work through the elimination process — a simple formula will give the answer. This formula, you won’t be surprised to hear, has connections to the powers of two and binary numbers. I will discuss my favorite solution, one based on the powers of two.
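One common way to state a powers-of-two formula is this: write n as the largest power of two not exceeding n plus a leftover, and the winner stands at position 2 × leftover + 1. The sketch below assumes the same convention as before (positions numbered from 1, with the person at position 2 eliminated first); the name `josephus_winner_formula` is hypothetical, and the formula needs adjusting if elimination starts elsewhere.

```python
def josephus_winner_formula(n: int) -> int:
    """Return the winner's 1-based position without simulating the eliminations.

    Assumption: person 2 is eliminated first (counting starts at person 1).
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    largest_power = 1 << (n.bit_length() - 1)  # largest power of two <= n
    leftover = n - largest_power               # what remains after removing it
    return 2 * leftover + 1


for n in (1, 8, 13, 16):
    print(n, josephus_winner_formula(n))
# prints:
# 1 1
# 8 1
# 13 11
# 16 1
```

For 13 participants, the largest power of two is 8 and the leftover is 5, giving position 2 × 5 + 1 = 11. When n is itself a power of two, the leftover is 0 and the winner is the person at position 1.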


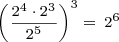

